Other Tools and Resources for Music Accessibility

The following entries are additional accessibility resources that we are researching for use at the University of North Texas College of Music. In their current state, the resources listed here have undergone sufficient field testing to be offered as possible options, but the entries will be expanded and edited as our testing and implementation process identifies the best applications and situations for each.

REAPER Digital Audio Workstations and Screen Readers

Areas Assisted:

- Visual Impairment

Possible Applications:

- Composition

- Recording

Depending on their field of study, and given how music practices continue to develop, students may benefit from recording software such as a Digital Audio Workstation (DAW).

REAPER Accessibility

REAPER is a complete digital audio production application for computers, offering a full multitrack audio and MIDI recording, editing, processing, mixing and mastering toolset. The software is available for a free, fully functional 60-day evaluation period. A license is also available for purchase at a discounted price for personal or educational use.

The REAPER Accessibility Wiki offers an extensive guide for visually impaired users to get the most out of the software with helpful extensions such as OSARA and SWS, used alongside screen readers such as NVDA. We encourage users to review the Accessibility Wiki to better understand the depth of accessibility in REAPER.

Chord Chart Reading with VoiceOver in iReal Pro

Areas Assisted:

- Visual Impairment

Possible Applications:

- Rehearsal/Performance

Chord charts are a common tool for jazz students and performers alike. iReal Pro (available for iOS and Android) is a tool for musicians to create, collect, and use their own chord charts for learning and performing music. It can also integrate with the iOS screen reader VoiceOver to read chord charts and other musical elements aloud, assisting musicians who rely on screen readers. See the following tutorial by musician and accessibility advocate Zach Lattin: Using Voice Over Screen reader with IReal Pro.

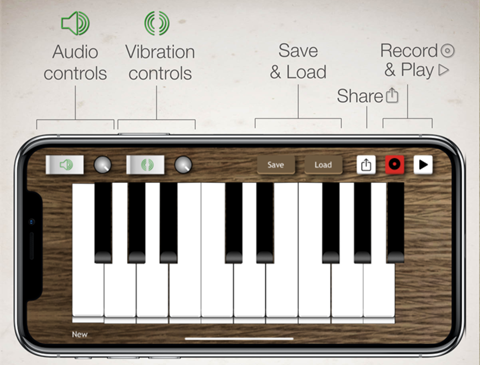

Bone Conduction Keyboard with Music Vibes

Areas Assisted:

- Hard of Hearing

Possible Applications:

- Theory/Analysis/Pedagogy

- Performance (potentially)

Bone conduction is the process by which sound is conducted to the inner ear through the bones of the skull, bypassing the ear canal and middle ear. It is often explored as a treatment option for certain types of hearing loss. Music Vibes is an iOS application that relies on the haptic vibration technology in mobile devices to create a bone conduction effect, translating audio played on a small app-based keyboard into vibration. The keyboard itself has a small pitch range (slightly over an octave), and the nature of the device makes it difficult to use for traditional performance. However, the haptic bone conduction approach of this application may be useful for certain introductory courses in music.

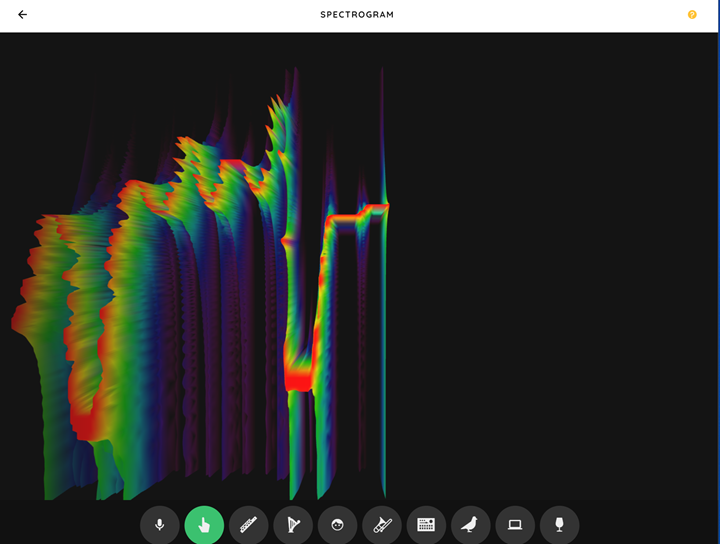

Visual Music Experiments via Chrome Music Lab

Areas Assisted:

- Mobility Issues

- Hard of Hearing

Possible Applications:

- Theory/Analysis/Pedagogy

Chrome Music Lab by Google offers a number of visually based, hands-on experiments that teach the fundamentals of music. Though designed for introductory music learning with a focus on connections to STEM subjects, the experiments are versatile enough that they may work well in introductory or general education courses.

Some specific experiments that may provide accessible resources are listed below:

Chrome Music Spectrogram

A visual tool that shows the spectrogram of a sound, with interactivity via the built-in microphone or by tracing a contour with the cursor. The visual representation may be useful for students who are hard of hearing, and the interface may prove useful for students with mobility-related diagnoses that restrict instrument playing.
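For instructors who want to explain what a spectrogram displays, the following is a minimal Python sketch, not the code used by Chrome Music Lab. It assumes the sound has already been captured as a list of samples, splits it into non-overlapping frames, and measures how strongly each frequency is present in each frame. All function names and the demo values (8000 Hz sample rate, 400-sample frames, 440 Hz and 880 Hz tones) are illustrative assumptions.

```python
import cmath
import math


def magnitude_spectrum(frame):
    """Return DFT magnitudes for bins 0 .. N/2 of one frame of samples."""
    n = len(frame)
    magnitudes = []
    for k in range(n // 2 + 1):
        # Correlate the frame with a complex sinusoid at bin k.
        total = sum(
            sample * cmath.exp(-2j * math.pi * k * i / n)
            for i, sample in enumerate(frame)
        )
        magnitudes.append(abs(total))
    return magnitudes


def spectrogram(samples, frame_size):
    """Split samples into non-overlapping frames; return one spectrum per frame."""
    return [
        magnitude_spectrum(samples[start:start + frame_size])
        for start in range(0, len(samples) - frame_size + 1, frame_size)
    ]


def dominant_frequency(spectrum, sample_rate, frame_size):
    """Frequency in Hz of the strongest bin in one spectrum."""
    peak_bin = max(range(len(spectrum)), key=lambda k: spectrum[k])
    return peak_bin * sample_rate / frame_size


def sine_tone(frequency_hz, sample_rate, count):
    """Generate `count` samples of a sine wave (demo input only)."""
    return [
        math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(count)
    ]


# Demo: a 440 Hz tone followed by an 880 Hz tone, one frame each.
SAMPLE_RATE = 8000
FRAME_SIZE = 400
samples = (sine_tone(440, SAMPLE_RATE, FRAME_SIZE)
           + sine_tone(880, SAMPLE_RATE, FRAME_SIZE))
peaks = [
    dominant_frequency(spectrum, SAMPLE_RATE, FRAME_SIZE)
    for spectrum in spectrogram(samples, FRAME_SIZE)
]
print(peaks)
```

Each row of the result corresponds to one slice of time, and each value in a row to one frequency band, which is the same grid a spectrogram draws as color or brightness. A practical tool would likely use overlapping, windowed frames and a fast Fourier transform; the plain version here favors readability.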

Chrome Music Sound Waves

A tool that uses an on-screen keyboard to relate pitch to vibrating dots arranged in a grid pattern. As pitch increases, the dots oscillate faster, illustrating the relationship between pitch and the movement of sound waves. This could prove useful in helping students with hearing-related diagnoses develop a framework for sound and pitch in music.
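The relationship between pitch and oscillation speed can also be shown numerically. The sketch below is an illustration only and makes several assumptions that do not come from the Chrome Music Lab tool: 12-tone equal temperament, A4 tuned to 440 Hz, and MIDI note numbering (A4 = 69). The function names are hypothetical.

```python
def midi_to_frequency(note_number, a4_hz=440.0):
    """Frequency in Hz of a MIDI note, assuming 12-tone equal temperament."""
    return a4_hz * 2 ** ((note_number - 69) / 12)


def period_ms(frequency_hz):
    """Duration of one full oscillation in milliseconds."""
    return 1000.0 / frequency_hz


# Each octave up doubles the frequency and halves the oscillation period.
for name, note in [("A3", 57), ("A4", 69), ("A5", 81)]:
    freq = midi_to_frequency(note)
    print(f"{name}: {freq:.1f} Hz, one cycle every {period_ms(freq):.3f} ms")
```

Moving up one octave doubles the frequency, so each oscillation takes half as long. This matches the faster dot movement students see as they play higher keys.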

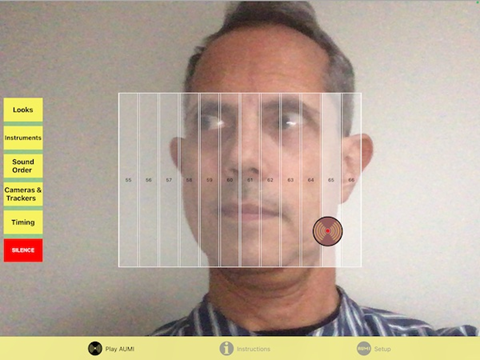

Gesture-based Music via Adaptive Use Musical Instruments (AUMI)

Adaptive Use Musical Instruments, or AUMI, is an ongoing project by the Deep Listening Institute. It has developed an interface that enables users to play sounds and musical phrases through movement and gestures, with an original focus on working with children who have profound physical disabilities. Using an iOS device and a camera, performers can interact with a gesture-based screen to play chosen sounds and phrases. Given the initial intent of the application, it may prove very useful for students with motor function diagnoses that prevent them from playing a traditional instrument.

Zones called “sound boxes” can be set up on the interactive screen and assigned notes or other sounds, which play when the camera senses motion over that sound box.
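The sound box idea can be sketched in a few lines of Python. This is a hypothetical model for explanation only and does not reflect how AUMI is implemented: it assumes each sound box is a rectangle in screen coordinates and that the camera's motion detection has already been reduced to a list of (x, y) points. The class, function, and sound names are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoundBox:
    """A rectangular screen zone with a sound assigned to it (hypothetical)."""
    sound: str
    left: float
    top: float
    width: float
    height: float

    def contains(self, x, y):
        """True if the point (x, y) falls inside this box."""
        return (self.left <= x < self.left + self.width
                and self.top <= y < self.top + self.height)


def triggered_sounds(boxes, motion_points):
    """Return the sounds of all boxes that contain at least one motion point."""
    return [
        box.sound for box in boxes
        if any(box.contains(x, y) for x, y in motion_points)
    ]


# Demo layout: two note boxes side by side and a wide drum box below them.
boxes = [
    SoundBox("C4", left=0, top=0, width=100, height=100),
    SoundBox("E4", left=100, top=0, width=100, height=100),
    SoundBox("drum", left=0, top=100, width=200, height=50),
]
print(triggered_sounds(boxes, [(50, 20), (150, 120)]))
```

Because boxes can be any size and placed anywhere on screen, a layout like this can be adapted to the range of motion available to each performer.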

See the AUMI Tutorials page for instructions in multiple languages and formats.